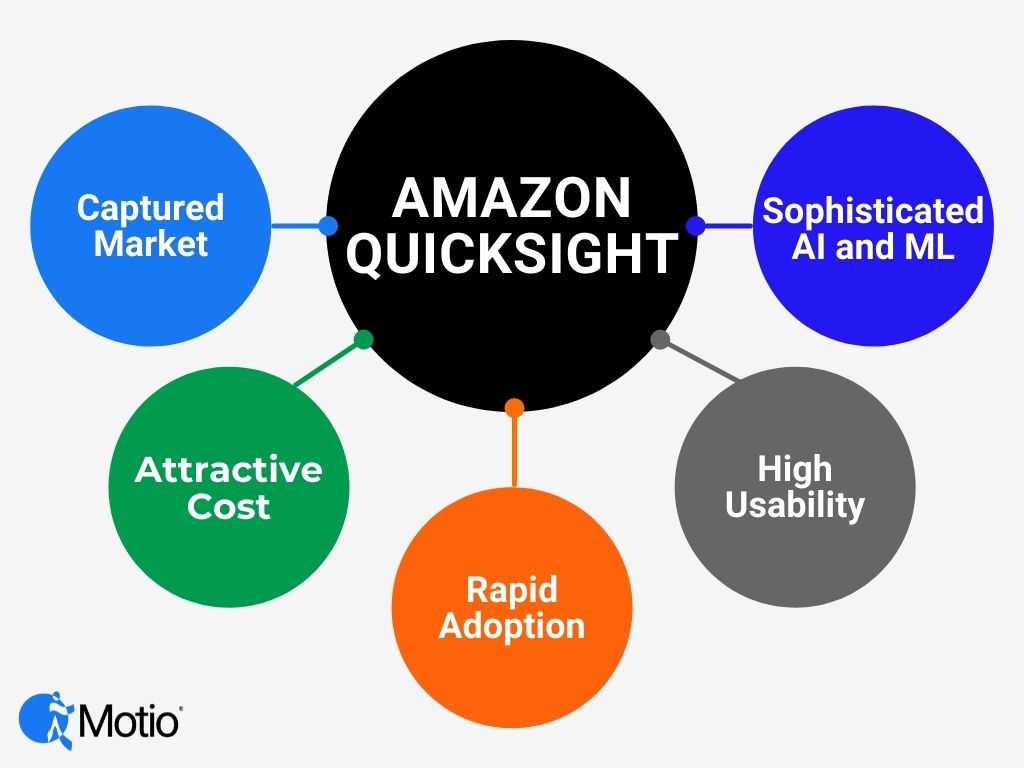

That’s a bold statement, sure, but in our analysis, QuickSight has all of the qualities needed to increase its market penetration. QuickSight was introduced by Amazon in 2015 as an entrant in the business intelligence, analytics and visualization space. It first appeared in Gartner’s Magic Quadrant in 2019, was absent in 2020, and was added back in 2021. We have watched as Amazon has developed the application organically, resisting the temptation to purchase the technology as other large technology companies have done.

We Predict QuickSight will Outperform Competitors

We expect QuickSight to overtake Tableau, Power BI and Qlik in the Leaders quadrant in the next couple of years. There are five key reasons.

- Built-in market. QuickSight is integrated into AWS, which holds about a third of the cloud market and is the largest cloud provider in the world.

- Sophisticated AI and ML tools available. Strong in augmented analytics. It does what it does well. It does not try to be both an analytics tool and a reporting tool.

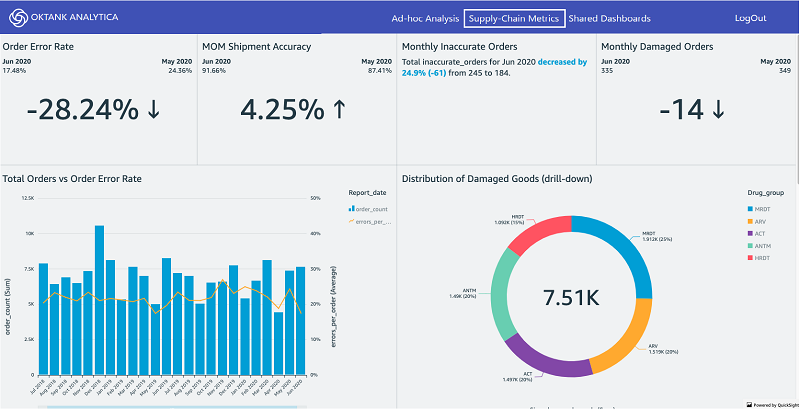

- Usability. The application itself is intuitive and makes it easy to create ad hoc analyses and dashboards. QuickSight has already adapted its solutions to customer needs.

- Adoption. Rapid adoption and time to insight. It can be quickly provisioned.

- Economics. Cost scales with usage, like the cloud itself.

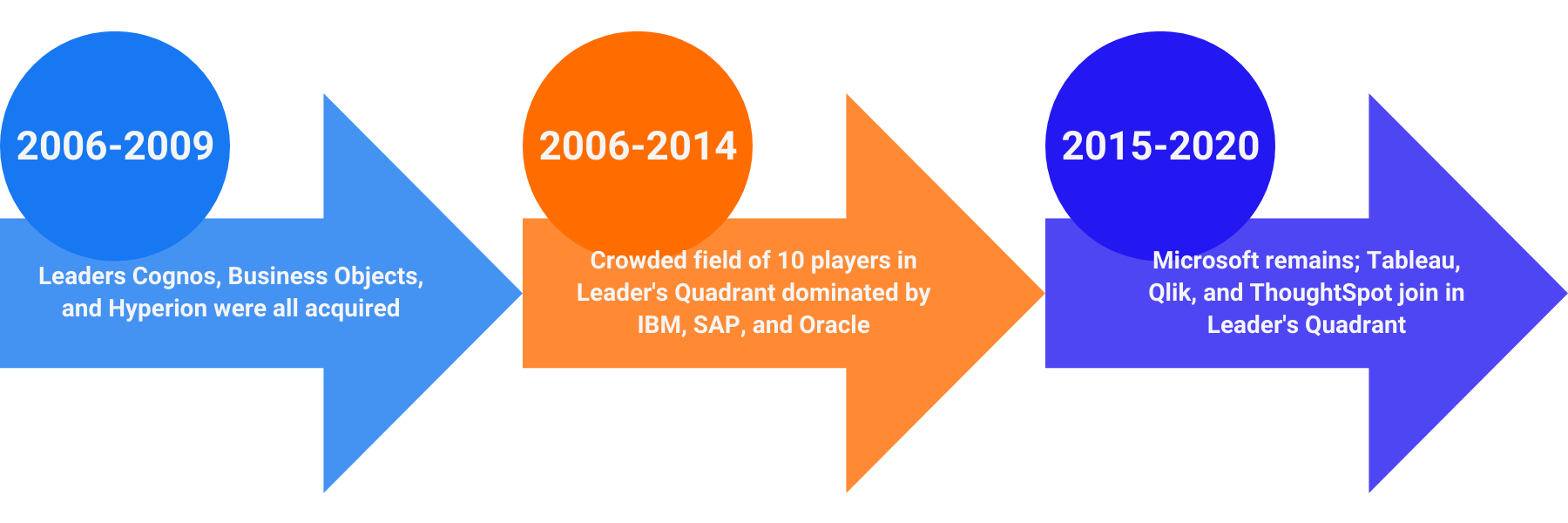

Constant Changing of the Frontrunner

In an exciting horse race, leaders change. The same can be said of the leaders in the Analytics and Business Intelligence space over the past 15 to 20 years. In reviewing Gartner’s BI Magic Quadrant over those years, we see that it is hard to hold the top spot, and some of the names at the top have changed.

To oversimplify, if we assume that Gartner’s BI Magic Quadrant represents the market, the marketplace has rewarded the vendors who have listened and adapted to the changing requirements of the marketplace. That is one of the reasons that QuickSight is on our radar.

What QuickSight does well

- Rapid deployment

  - Users can be onboarded programmatically.

  - In Gartner’s Solution Scorecard for AWS Cloud Analytical Data Stores, the strongest category is Deployment.

  - Ease of product administration and installation, as well as scalability, receive high scores from Dresner in their Advisory Services 2020 report.

  - Can scale to hundreds of thousands of users without any server setup or management.

  - Serverless scale to tens of thousands of users.

- Inexpensive

  - Priced on par with Microsoft’s Power BI and significantly lower than Tableau (a low annual author subscription plus pay-per-session pricing of $0.30 per 30-minute session, with a cap of $60/year).

  - No per-user fees. Less than half the cost of other vendors’ per-user licensing.

  - Auto-scaling.

- Uniqueness

  - Built for the cloud from the ground up.

  - Performance is optimized for the cloud. SPICE, QuickSight’s internal storage, holds a snapshot of your data. In the Gartner Magic Quadrant for Cloud Database Management Systems, Amazon is recognized as a strong leader.

  - Visualizations are on par with Tableau, Qlik and ThoughtSpot.

  - Easy to use. Uses AI to automatically infer data types and relationships to generate analyses and visualizations.

  - Integration with other AWS services. Built-in natural language queries and machine learning capabilities. Users can use ML models built in Amazon SageMaker, with no coding necessary. All users need to do is connect a data source (S3, Redshift, Athena, RDS, etc.) and select which SageMaker model to use for their prediction.

- Performance and reliability

  - Optimized for the cloud, as mentioned above.

  - Amazon scores highest in reliability of product technology in Dresner’s Advisory Services 2020 report.
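To make the pay-per-session pricing mentioned above concrete, here is a rough sketch of the arithmetic in Python. It uses only the two figures quoted in this article ($0.30 per 30-minute session and a $60/year cap). The function names are ours, purely for illustration, and the author subscription is left out because no figure is given for it here. Check Amazon's current pricing before relying on these numbers.

```python
# Figures quoted in this article; held as integer cents to avoid rounding drift.
SESSION_PRICE_CENTS = 30    # $0.30 per 30-minute session
ANNUAL_CAP_CENTS = 6000     # $60 per user per year


def annual_session_cost(sessions_per_year: int) -> float:
    """Yearly pay-per-session cost in dollars for one user, never above the cap."""
    if sessions_per_year < 0:
        raise ValueError("sessions_per_year must be non-negative")
    cents = min(sessions_per_year * SESSION_PRICE_CENTS, ANNUAL_CAP_CENTS)
    return cents / 100


def sessions_to_reach_cap() -> int:
    """Smallest number of sessions per year at which the cap applies."""
    # Ceiling division on integers: -(-a // b)
    return -(-ANNUAL_CAP_CENTS // SESSION_PRICE_CENTS)


if __name__ == "__main__":
    for sessions in (10, 100, 200, 1000):
        print(f"{sessions:>5} sessions/year -> ${annual_session_cost(sessions):.2f}")
    print("Cap reached at", sessions_to_reach_cap(), "sessions/year")
```

Under these assumptions, an occasional user who opens a dashboard ten times a year costs $3, while anyone past 200 sessions a year costs a flat $60. This is the sense in which cost scales with usage.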

Additional Strengths

There are a couple of other reasons why we see QuickSight as a strong contender. These are less tangible, but just as important.

- Leadership. In mid-2021, Amazon announced that Adam Selipsky, former AWS executive and current head of Salesforce Tableau, will run AWS. In late 2020, Greg Adams joined AWS as Director of Engineering, Analytics & AI. He was a nearly 25-year veteran of IBM and Cognos Analytics and Business Intelligence. His most recent role was IBM’s Vice President of Development, leading the Cognos Analytics development team. Prior to that, he was Chief Architect, Watson Analytics Authoring. Both are excellent additions to the AWS leadership team, bringing a wealth of experience and an intimate knowledge of the competition.

- Focus. Amazon has concentrated on developing QuickSight from the ground up rather than buying the technology from a smaller company. They have avoided the “me too” trap of having to have all competitive features at any cost or regardless of quality.

Differentiation

Visualization, which was a differentiating factor just a few years ago, is table stakes today. All major vendors offer sophisticated visualizations in their analytics and BI packages. Today, the differentiating factors include what Gartner terms augmented analytics, such as natural language querying, machine learning and artificial intelligence. QuickSight leverages Amazon’s QuickSight Q, a machine-learning-powered tool.

Potential Downsides

There are a few things that work against QuickSight.

- Limited functionality and business applications, especially for data preparation and management.

- The biggest objection is that it cannot connect directly to some data sources. That limitation hasn’t seemed to hinder the dominance of Excel in its space, where users simply move the data. Gartner agrees, noting that “AWS analytical data stores can be used either solely or as part of the hybrid and multi-cloud strategy to deliver a complete, end-to-end analytics deployment.”

- Works only on Amazon’s SPICE database in the AWS cloud, but AWS does hold 32% of the cloud market.

QuickSight Plus

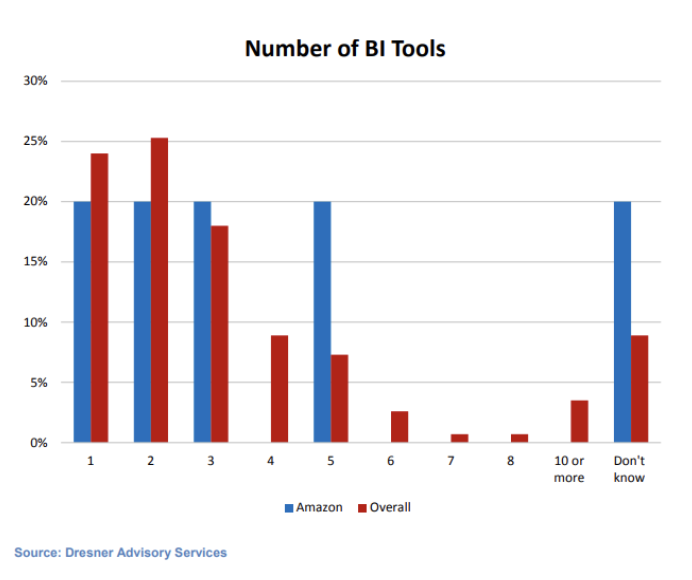

We see another trend in how organizations use analytics and Business Intelligence tools that will benefit the adoption of QuickSight. Ten years ago, businesses tended to purchase a single enterprise-wide BI tool as the standard for the organization. Today, many run several tools side by side, and recent research by Dresner supports this. In their study, 60% of Amazon QuickSight organizations use more than one tool. Fully 20% of Amazon users report the use of five BI tools. It looks like the users adopting QuickSight may not necessarily be abandoning their existing tools. We predict that organizations will adopt QuickSight in addition to their existing Analytics and BI tools, based on each tool’s strengths and the organization’s needs.

Sweet Spot

Even if your data is on premises or in another vendor’s cloud, it might make sense to move the data you want to analyze to AWS and point QuickSight at it.

- Anyone who needs a stable, fully managed cloud-based analytics and BI service that can provide ad hoc analysis and interactive dashboards.

- Clients who are already in the AWS cloud but don’t have a BI tool.

- Teams that need a proof-of-concept (POC) BI tool for new applications.

QuickSight may be a niche player, but it will own its niche. Look for QuickSight in Gartner’s leaders quadrant as early as next year. Then, by 2024 – because of its strengths and organizations adopting multiple Analytics and BI tools – we see 60-80% of Fortune 500 companies adopting Amazon QuickSight as one of their key analytic tools.