How engineering discipline, governance, and operationalization redefine analytics maturity

The analytics maturity model was more or less the industry standard 10 or 15 years ago. Gartner recommended it. Consultants monetized it. Executives read about it in airline seat-back magazines.

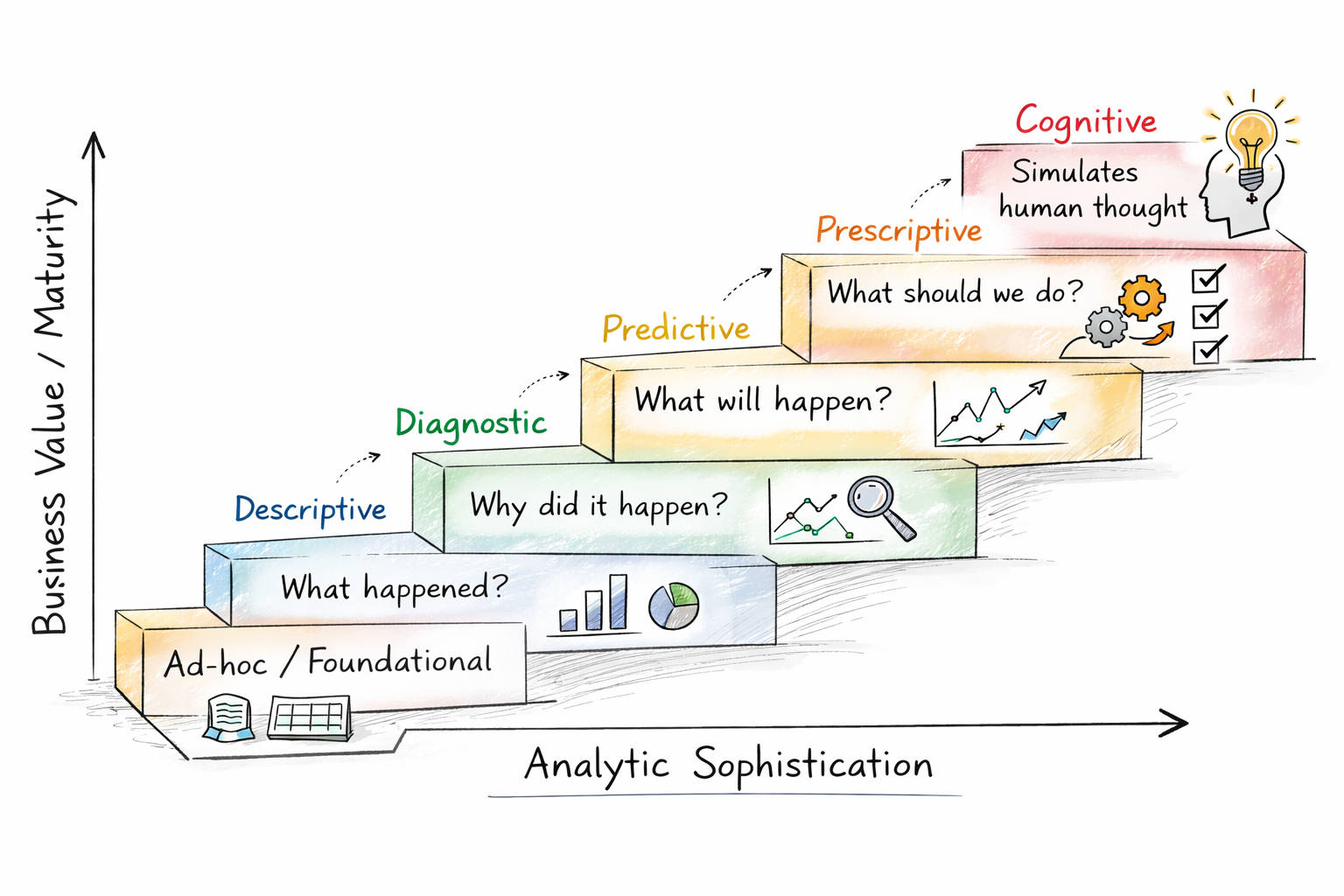

The analytics staircase was simple: Descriptive → Diagnostic → Predictive → Prescriptive → Cognitive. I’m not going to spend time defining them here. You know them. You start with spreadsheets, and the organization is fully mature when AI is implemented. Checklists helped you evaluate which step you were on. Each platform justified new tooling and new investment. We were told that each step unlocked additional value in the business case.

Unfortunately, the staircase described only one dimension of maturity – analytic capability. It said nothing about whether the organization could deploy, govern, or sustain what it built.

To understand why, you have to step back from the staircase and watch the governance pendulum that shaped it.

Enterprise BI and Assumed Governance

Early maturity frameworks emerged during the era of centralized Enterprise BI. Corporate IT owned the stack:

- Enterprise data warehouses

- Controlled semantic layers

- Governed reporting environments

- Certified KPI definitions

- Approved tools

Governance was not debated because control was structural. The benefits were clear: the organization knew who was using what, data definitions were set at the corporate level, and the Center of Excellence ensured that development standards were followed.

But the system had friction. The weakness was not discipline. It was velocity. Create a ticket, and your new report would be added to the deployment queue for release by the offshore team twice a week. Testing and release cycles were anchored to promotion calendars. Business units waited months for insights they needed in weeks. This was not the promised “business at the speed of thought.”

The maturity staircase flourished in this era because governance was assumed. IT controlled all roads in.

The Swing Toward Self-Serve

Then the pendulum started to swing. Cloud platforms lowered infrastructure barriers. Visualization tools democratized analytics. Departmental budgets enabled autonomy. With the new tools, anyone could create their own dashboard.

Business didn’t want governance. Business wanted answers. And increasingly, it had the funding to acquire tools without enterprise mediation. Corporate IT resisted, but no one wins at whack-a-mole. The economics were decentralized.

Self-service analytics proliferated. Dashboards multiplied. Data extracts spread. Innovation accelerated. That was all great. But something else scaled as well.

Craft Analytics

Self-service made heroes of individual practitioners and multiplied craft analytics. Early self-serve maturity looked less like institutional capability and more like individual craftsmanship. Smart business analysts taught themselves the tools and did what made sense. They worked in vacuums.

Analysts built end-to-end pipelines. Business logic lived in personal SQL scripts and under the covers of individual reports. Transformations hid inside spreadsheets. Testing – in air quotes – was manual. Documentation… Uhm, documentation?

If the individual was strong, the analytics were strong. If they left, the capability left with them. I’ve even seen vacation schedules become risk factors.

Organizations believed they were climbing the maturity staircase. Instead of scaling analytics, they were scaling analysts.

Individual craftsmanship was admired, but craftsmanship does not industrialize decision-making. This is where the staircase began to fail.

Where the Analytics Maturity Model Breaks Down

The maturity model began to fracture precisely at the moment predictive analytics entered the enterprise. Forecasting engines emerged. Optimization models entered planning cycles. But governance for these new processes lagged.

Definitions diverged across departments. Shadow KPI calculations proliferated. Finance and HR ran parallel calculations for productivity. International reconciliation was a nightmare. Enterprise platforms coexisted with spreadsheet shadow systems calculating “official” KPIs off-grid.

Predictive tooling matured quickly, but version control, testing frameworks, and deployment governance lagged. The staircase assumed linear progression. Reality delivered asymmetrical maturity. Sophisticated analytics rested on artisanal delivery foundations.

Organizations reached predictive capability without operational readiness. They mistook experimentation and individual successes for maturity. The weakness of the maturity staircase was never its logic – it was its assumptions. It assumed that as analytic sophistication increased, governance would follow. That predictive capability implied operationalization. That enterprise adoption would scale in parallel with tooling.

The industry reached a false summit. We could brag about predictive analytics, but the organizational maturity to support them hadn’t followed. Which leads to the uncomfortable reframing:

Predictive analytics began appearing across enterprises, but operational discipline lagged behind sophistication.

Industrialized Analytics as the New Maturity Standard

The Pendulum Returns – Governed Self-Serve

We’re witnessing the pendulum swing again – not backward toward centralized Enterprise BI, but forward toward governed self-serve. OK, so it’s an awkward analogy. Maybe “what’s old is new again” is a better fit for what we’re seeing. Until recently, governed self-serve was an oxymoron.

Modern enterprises don’t want to suppress business agility. They want to industrialize it. The challenge is allowing self-service while maintaining a disciplined approach. This new model combines autonomy with structural discipline:

- Federated governance frameworks

- Shared semantic layers

- Data product ownership

- Enterprise observability

- Delivery lifecycle standards

Agility is preserved. Structural integrity is restored. The best of both worlds.
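To make the shared semantic layer idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Metric` and `SemanticLayer` names, the owner label, and the productivity formula are hypothetical and not taken from any particular product. The point is only that Finance and HR call one registered definition instead of maintaining parallel calculations.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Mapping


@dataclass(frozen=True)
class Metric:
    """A certified KPI definition with a named owner (hypothetical structure)."""
    name: str
    owner: str
    compute: Callable[[Mapping[str, float]], float]


class SemanticLayer:
    """Minimal registry: one definition per metric name, shared by all consumers."""

    def __init__(self) -> None:
        self._metrics: Dict[str, Metric] = {}

    def register(self, metric: Metric) -> None:
        # Reject a second definition so a shadow variant cannot slip in silently.
        if metric.name in self._metrics:
            raise ValueError(f"metric '{metric.name}' is already defined")
        self._metrics[metric.name] = metric

    def evaluate(self, name: str, inputs: Mapping[str, float]) -> float:
        return self._metrics[name].compute(inputs)


layer = SemanticLayer()
layer.register(
    Metric(
        name="productivity",
        owner="finance-data-products",  # illustrative owner label
        compute=lambda row: row["revenue"] / row["fte_count"],  # assumed formula
    )
)

# Finance and HR both call the shared definition instead of keeping their own.
inputs = {"revenue": 1_200_000.0, "fte_count": 40.0}
finance_view = layer.evaluate("productivity", inputs)
hr_view = layer.evaluate("productivity", inputs)
```

Both views return the same number because there is only one place for the definition to live. A real semantic layer would add versioning, access control, and lineage, none of which this sketch attempts.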

Industrialization – The New Definition of Maturity

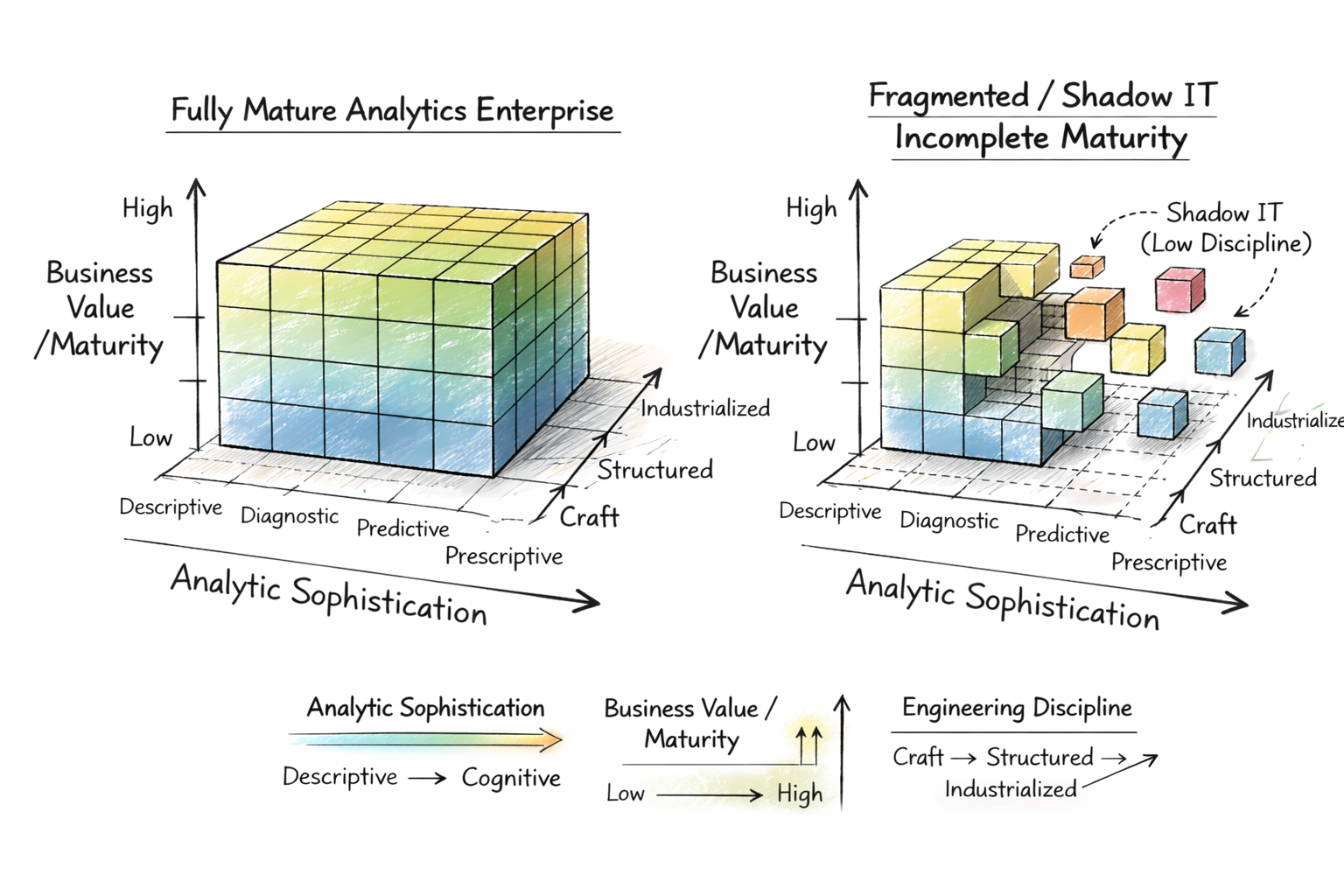

To understand this shift, maturity must be visualized differently – not as a staircase, but as a cube.

Three dimensions define modern analytics maturity:

- Analytic Sophistication

- Business Value / Maturity

- Engineering Discipline

The staircase measured only the first. Industrialization introduces the third – discipline – as structural depth. A fully mature enterprise is not one with the most advanced models. It is the one where analytics delivery is engineered, governed, observable, and repeatable across the organization.

Maturity is no longer about what analytics can do; it’s about how analytics is built, governed, and sustained.

The Engine of Industrialization

Industrial discipline does not emerge through policy alone. It is powered by an operational infrastructure:

Telemetry → Observability → Operationalization

Telemetry inventories assets, pipelines, and dependencies. Observability interprets that telemetry, revealing performance behavior, data quality, and failure patterns. Operationalization acts on those observations, enforcing testing, automating deployments, and governing delivery pipelines.

The governing principle is simple: You cannot govern what you cannot observe. Without observability, governance is aspirational. With it, governance becomes executable.
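The three stages can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a reference design: the `RunRecord` schema, the pipeline names, and the 10% failure threshold are all hypothetical. Telemetry is the raw run records, observability turns them into a failure rate, and operationalization acts on that rate by gating deployment.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RunRecord:
    """Telemetry: one raw fact about a pipeline run (hypothetical schema)."""
    pipeline: str
    succeeded: bool


def observe(records: List[RunRecord]) -> Dict[str, float]:
    """Observability: interpret raw run records as a failure rate per pipeline."""
    totals: Dict[str, int] = {}
    failures: Dict[str, int] = {}
    for record in records:
        totals[record.pipeline] = totals.get(record.pipeline, 0) + 1
        if not record.succeeded:
            failures[record.pipeline] = failures.get(record.pipeline, 0) + 1
    return {name: failures.get(name, 0) / count for name, count in totals.items()}


def allowed_to_deploy(
    failure_rates: Dict[str, float],
    pipeline: str,
    max_failure_rate: float = 0.1,  # assumed threshold, chosen for illustration
) -> bool:
    """Operationalization: act on the observation by gating deployment."""
    # A pipeline with no telemetry is unobserved, so it is not allowed through.
    if pipeline not in failure_rates:
        return False
    return failure_rates[pipeline] <= max_failure_rate


telemetry = [
    RunRecord("sales_forecast", True),
    RunRecord("sales_forecast", True),
    RunRecord("churn_scores", True),
    RunRecord("churn_scores", False),
]
rates = observe(telemetry)
```

With this data, `sales_forecast` passes the gate and `churn_scores` does not. A pipeline that never emitted telemetry is also blocked, which is the "cannot govern what you cannot observe" principle expressed as a default.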

A fully mature analytics enterprise exhibits:

- Version-controlled analytics assets.

- CI/CD deployment pipelines.

- Governed semantic layers.

- Model monitoring and observability.

- Traceable lineage.

- Enterprise delivery standards.

This is not advanced analytics as a one-off. This is not just the prototype. It is analytics as infrastructure.

The Analytics Development Life Cycle

This industrialization manifests through the Analytics Development Life Cycle (ADLC) – the engineering discipline that analytics learned from software development. It introduces engineering rigor into analytics delivery:

- Intake and prioritization backlogs.

- Requirements engineering.

- Data modeling standards.

- Automated testing.

- Deployment orchestration.

- Monitoring and incident response.

Analytics is no longer like that $10 loaf of bread you buy at the artisanal bakery. Analytics output becomes a sustainable, consistent, scalable product.
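As one illustration of the automated testing step in the list above, here is a small Python sketch. The `monthly_revenue` transformation, the order fields, and the sample values are hypothetical. The tests are plain functions with `assert` statements, written so that a test runner such as pytest could collect them, though nothing here depends on it.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def monthly_revenue(orders: List[Row]) -> Dict[str, float]:
    """Transformation under test: total order amount per month (hypothetical)."""
    totals: Dict[str, float] = {}
    for order in orders:
        month = str(order["order_date"])[:7]  # keep the "YYYY-MM" prefix
        totals[month] = totals.get(month, 0.0) + float(order["amount"])
    return totals


def find_missing_amounts(orders: List[Row]) -> List[str]:
    """Data quality check: return the ids of orders whose amount is missing."""
    return [str(o["order_id"]) for o in orders if o.get("amount") is None]


def test_monthly_revenue_sums_by_month() -> None:
    orders = [
        {"order_id": "A1", "order_date": "2024-01-15", "amount": 100.0},
        {"order_id": "A2", "order_date": "2024-01-20", "amount": 50.0},
        {"order_id": "B1", "order_date": "2024-02-01", "amount": 25.0},
    ]
    assert monthly_revenue(orders) == {"2024-01": 150.0, "2024-02": 25.0}


def test_missing_amounts_are_reported() -> None:
    orders = [
        {"order_id": "A1", "order_date": "2024-01-15", "amount": None},
        {"order_id": "A2", "order_date": "2024-01-20", "amount": 50.0},
    ]
    assert find_missing_amounts(orders) == ["A1"]
```

The difference from craft analytics is that these checks run on every change rather than when someone remembers to eyeball the output.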

Measuring Enterprise Analytics Maturity

This reframing forces a different set of maturity questions. Legacy assessments focused on capability: Do we have predictive analytics? How far have we moved from spreadsheets? How advanced are our models? Sophistication was treated as the proxy for progress.

Industrialized maturity evaluates structural readiness. It asks whether analytics is engineered to scale, governed to endure, and operationalized to deliver value beyond individual artifacts. Version control, redeployable pipelines, traceable lineage, production observability, and enforceable governance are no longer one-offs. They are the operating foundation of maturity.
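A structural readiness assessment could be sketched as a simple checklist in Python. This is an assumption about how one might record the answers, not an established scoring method: the `StructuralReadiness` class, the equal weighting of the five practices, and the example team are all hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class StructuralReadiness:
    """The five readiness practices named above, recorded as yes/no answers."""
    version_control: bool
    redeployable_pipelines: bool
    traceable_lineage: bool
    production_observability: bool
    enforceable_governance: bool

    def score(self) -> float:
        """Fraction of practices in place, from 0.0 to 1.0 (equal weights assumed)."""
        answers = [getattr(self, f.name) for f in fields(self)]
        return sum(answers) / len(answers)

    def gaps(self) -> List[str]:
        """Names of the practices that are not yet in place."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# A hypothetical team that ships predictive models but only uses version control.
team = StructuralReadiness(
    version_control=True,
    redeployable_pipelines=False,
    traceable_lineage=False,
    production_observability=False,
    enforceable_governance=False,
)
readiness = team.score()
missing = team.gaps()
```

A team like this would sit high on the old staircase and low on structural readiness, which is exactly the gap the legacy capability questions never surfaced.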

Seen through this lens, the analytics maturity model was not wrong. It was incomplete. It measured what analytics could do, not how analytics was built, governed, and sustained. It emerged in an era of centralized enterprise control, fractured under the acceleration of self-serve adoption, and is now being redefined through industrialization.

The governance pendulum tells the story. Enterprise BI optimized for control. Self-serve analytics optimized for speed. Industrialized analytics is the synthesis – engineering agility without sacrificing coherence.

Analytics maturity, therefore, is not defined by capability alone. It is defined by the convergence of analytic sophistication with operational discipline, governance penetration, and enterprise adoption. Only when these dimensions align does analytics move from experimentation to infrastructure.

The staircase described aspiration. Industrialization defines sustainability. One mapped analytic possibility. The other determines whether analytics survives contact with production environments.

In this paradigm, analytics maturity is no longer a straight line from the bottom step to the top. It’s three-dimensional structural integrity – engineered, governed, and operationalized across the enterprise.

Predictive models built without engineering discipline aren’t maturity milestones – they’re prototypes waiting to fail under scale.